Lensa reignites discussion among artists over the ethics of AI art

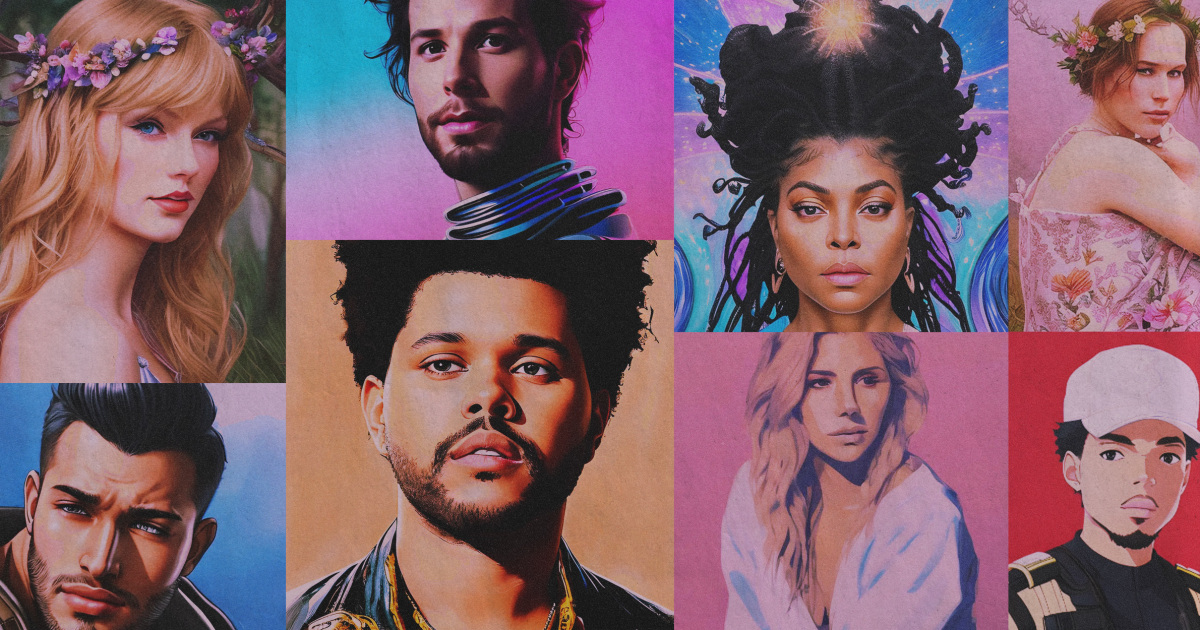

For many online, Lensa AI is a cheap, accessible profile picture generator. But in digital art circles, the popularity of artificial intelligence-generated art has raised major privacy and ethics concerns.

Lensa, which launched as a photo editing app in 2018, went viral last month after releasing its “magic avatars” feature. It uses a minimum of 10 user-uploaded images and the neural network Stable Diffusion to generate portraits in a variety of digital art styles. Social media has been flooded with Lensa AI portraits, from photorealistic paintings to more abstract illustrations. The app claimed the No. 1 spot in the iOS App Store’s “Photo & Video” category earlier this month.

But the app’s growth, and the rise of AI-generated art in recent months, has reignited discussion over the ethics of creating images with models that have been trained using other people’s original work.

Lensa is tinged with controversy: multiple artists have accused Stable Diffusion of using their art without permission. Many in the digital art space have also expressed qualms over AI models producing images en masse so cheaply, especially if those images imitate styles that actual artists have spent years refining.

For a $7.99 service fee, users receive 50 unique avatars, which artists said is a fraction of what a single portrait commission normally costs.

Companies like Lensa say they’re “bringing art to the masses,” said artist Karla Ortiz. “But really what they’re bringing is forgery, art theft [and] copying to the masses.”

In an email to NBC News on Wednesday, Prisma Labs CEO Andrey Usoltsev clarified that bringing art to the masses “was never part of the company’s mission” and said that the “democratization of access” to technology like Stable Diffusion is “quite an incredible milestone.”

“What was once available only to techy well-versed users is now out there for absolutely everyone to enjoy. No specific skills are required,” Usoltsev said.

“As AI technology becomes increasingly more advanced and accessible, it is likely that we will see AI-powered tools and features being widely integrated into consumer-facing apps at a rapid scale. We’d like to be a part of this ongoing dialogue and steer the use of such technology in a safe and ethical way.”

Prisma issued a lengthy Twitter thread on Tuesday morning, in which it addressed concerns about AI art replacing art by actual artists.

The thread did not address accusations that many artists didn’t consent to the use of their work for AI training.

“As cinema didn’t kill theater and accounting software hasn’t eradicated the profession, AI won’t replace artists but can become a great assisting tool,” the company tweeted. “We also believe that the growing accessibility of AI-powered tools would only make man-made art in its creative excellence more valued and appreciated, since any industrialization brings more value to handcrafted works.”

The company said that AI-generated images “can’t be described as exact replicas of any particular artwork.”

Usoltsev said that he could not provide further comment regarding the “third party research and methodologies” used by Stability AI, which developed Stable Diffusion.

For some artists, AI models are a creative tool. Several have pointed out that the models are helpful for generating reference images that are otherwise difficult to find online. Writers have also posted about using the models to visualize scenes in their screenplays and novels. While the value of art is subjective, the crux of the AI art controversy is the right to privacy.

Ortiz, who is known for designing concept art for movies like “Doctor Strange,” also paints fine art portraits. When she realized that her art was included in a dataset used to train the AI model that Lensa uses to generate avatars, she said it felt like a “violation of identity.”

Prisma Labs deletes user photos from the cloud services it uses to process the images after it uses them to train its AI, the company told TechCrunch. The company’s user agreement states that Lensa can use the photos, videos and other user content for “operating or improving Lensa” without compensation.

In its Twitter thread, Lensa said that it uses a “separate model for each user, not a one-size-fits-all monstrous neural network trained to reproduce any face.” The company also stated that each user’s photos and “associated model” are permanently erased from its servers as soon as the user’s avatars are generated.

The fact that Lensa uses user content to further train its AI model, as stated in the app’s user agreement, should alarm the public, artists who spoke with NBC News said.

“We’re learning that even if you’re using it for your own inspiration, you’re still training it with other people’s data,” said Jon Lam, a storyboard artist at Riot Games. “Anytime people use it more, this thing just keeps learning. Anytime anyone uses it, it just gets worse and worse for everybody.”

Image synthesis models like Google Imagen, DALL-E and Stable Diffusion are trained using datasets of millions of images. The models learn associations between the arrangement of pixels in an image and the image’s metadata, which typically includes text descriptions of the image subject and artistic style.

The model can then generate new images based on the associations it has learned. When fed the prompt “biologically accurate anatomical description of a birthday cake,” for example, the model Midjourney generated unsettling images that looked like actual medical textbook material. Reddit users described the images as “brilliantly weird” and “like something straight out of a dream.”

The San Francisco Ballet even used images generated by Midjourney to promote this season’s production of “The Nutcracker.” In a press release earlier this year, the San Francisco Ballet’s chief marketing officer Kim Lundgren said that pairing the traditional live performance with AI-generated art was the “perfect way to add an unexpected twist to a holiday classic.” The campaign was widely criticized by artist advocacy groups.

A spokesperson for the ballet said the campaign was a “chance to experiment with contemporary technological tools,” and that nearly 30 people were involved in creating it.

“In the spirit of Bay Area ingenuity, we tried something new,” the spokesperson said. “SF Ballet remains deeply connected to and proudly a part of the diverse creative communities of the Bay Area.”

Ortiz said that images like the ones used in the San Francisco Ballet’s campaign “look so good due to the nonconsensual data they gathered from artists and the public.”

She was referring to the Large-scale Artificial Intelligence Open Network (LAION), a nonprofit organization that releases free datasets for AI research and development. LAION-5B, one of the datasets used to train Stable Diffusion and Google Imagen, includes publicly available images scraped from sites like DeviantArt, Getty Images and Pinterest.

Many artists have spoken out against models that have been trained with LAION because their art was used in the set without their knowledge or permission. When an artist used the site Have I Been Trained, which allows users to check if their images were included in LAION-5B, she found her own face and medical records. Ars Technica reported that “thousands of similar patient medical record photos” were also included in the dataset.

Artist Mateusz Urbanowicz, whose work was also included in LAION-5B, said that fans have sent him AI-generated images that bear striking similarities to his watercolor illustrations.

It’s clear that LAION is “not just a research project that someone put on the internet for everyone to enjoy,” he said, now that companies like Prisma Labs are using it for commercial products.

“And now we are facing the same problem the music industry faced with websites like Napster, which was maybe made with good intentions or without thinking about the ethical implications.”

The art and music industries abide by stringent copyright laws in the United States, but the use of copyrighted material in AI is legally murky. Using copyrighted material to train AI models might fall under fair use laws, The Verge reported. It’s more complicated when it comes to the content that AI models generate, and it’s difficult to enforce, which leaves artists with little recourse.

“They just take everything because it’s a legal gray zone and just exploiting it,” Lam said. “Because tech always moves faster than law, and law is always trying to catch up with it.”

Usoltsev asserted that Lensa is “fully GDPR and CCPA compliant.” To the best of his knowledge, he said, “the commercial use of the model does not constitute any legal violations.”

There is also little legal precedent for pursuing legal action against commercial products that use AI trained on publicly available material. Lam and others in the digital art space say they hope that a pending class action lawsuit against GitHub Copilot, a Microsoft product that uses an AI system trained on public code on GitHub, will pave the way for artists to protect their work. Until then, Lam said he’s wary of sharing his work online at all.

Lam isn’t the only artist worried about posting his art. After his recent posts calling out AI art went viral on Instagram and Twitter, Lam said that he received “an overwhelming amount” of messages from students and early career artists asking for advice.

The internet “democratized” art, Ortiz said, by allowing artists to promote their work and connect with other artists. For artists like Lam, who has been hired for most of his jobs because of his social media presence, posting online is vital for landing career opportunities. Putting a portfolio of work samples on a password-protected website does not compare to the exposure gained from sharing it publicly.

“If no one knows your art, they’re not going to go to your website,” Lam added. “And it’s going to be increasingly difficult for students to get their foot in the door.”

Adding a watermark may not be enough to protect artists. In a recent Twitter thread, graphic designer Lauryn Ipsum listed examples of the “mangled remains” of artists’ signatures in Lensa AI portraits.

Some argue that AI art generators are no different from an aspiring artist who emulates another’s style, which has become a point of contention within art circles.

Days after illustrator Kim Jung Gi died in October, a former game developer created an AI model that generates images in the artist’s unique ink and brush style. The creator said the model was an homage to Kim’s work, but it received immediate backlash from other artists. Ortiz, who was friends with Kim, said that the artist’s “whole thing was teaching people how to draw,” and to feed his life’s work into an AI model was “really disrespectful.”

Urbanowicz said he’s less bothered by an actual artist who’s inspired by his illustrations. An AI model, however, can churn out an image that he would “never make” and hurt his brand, for example if a model was prompted to generate “a store painted with watercolors that sells drugs or weapons” in his illustration style, and the image was posted with his name attached.

“If someone makes art based on my style, and makes a new piece, it’s their piece. It’s something they made. They learned from me as I learned from other artists,” he continued. “If you type in my name and store [in a prompt] to make a new piece of art, it’s forcing the AI to make art that I don’t want to make.”

Many artists and advocates also question if AI art will devalue work created by human artists.

Lam worries that companies will cancel artist contracts in favor of faster, cheaper AI-generated images.

Urbanowicz pointed out that AI models can be trained to replicate an artist’s previous work, but will never be able to create the art that an artist hasn’t made yet. Without decades of examples to learn from, he said, the AI images that looked just like his illustrations would never exist. Even if the future of visual art is uncertain as apps like Lensa AI become more common, he’s hopeful that aspiring artists will continue to pursue careers in creative fields.

“Only that person can make their unique art,” Urbanowicz said. “AI cannot make the art that they will make in 20 years.”
